It is easy to ignore simple tasks and put them off until the last second. In the modern technological age, however, we have grown used to the ease that technology provides. There is no need to look up directions manually, research with a physical encyclopedia, or mentally convert measurements, because all of these tasks can be performed with a simple command. Artificial intelligence (AI) has been in the world for longer than we think, well before the ChatGPT boom in 2023. As we continue to use and evolve with the capabilities of AI, what are the real costs of these technologies?

Catherine Stinson, Queen’s University National Scholar in Philosophical Implications of Artificial Intelligence and Assistant Professor in the Philosophy Department and School of Computing at Queen’s University, gave a lecture on January 29 titled ‘What is AI Made Of?’, funded by the Frank McKenna School of Politics, Philosophy, and Economics at Mt. A. Unbeknownst to most who use AI, its expansion and continued use carry significant consequences. Stinson touched on a common perception of AI: that language such as ‘the cloud’ lends it an atmosphere of immateriality. However, the cloud is tangible, and there are physical implications to our reliance on massive servers to host our data. By breaking these large and complicated technologies down into their component parts, we can better understand their impacts. Stinson discussed how the use of these AI tools, although it seems ‘free’ and inconsequential, is associated with numerous hazards. For example, these technologies require copious amounts of energy, coolants, and metals, some of which can be rare and sourced from conflict regions.

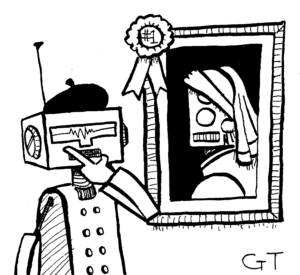

Language models such as ChatGPT are nevertheless commonly used in academia, and concerns are growing that using ChatGPT for papers and assignments constitutes plagiarism. Mt. A, for instance, has an expansive policy on plagiarism, although it does not specifically deem the use of these AI language models for school assignments or work a breach of academic integrity. Although much of the inner workings of chat models like ChatGPT is automated, all of its information comes from material that has been gathered over time. It draws on that material to generate an answer to a user’s question. Yet it does not state how or why it chose this information, and most who use it choose to trust it anyway.

Although it may not look like it, ChatGPT and other emerging AI models are not what they make themselves out to be. There are tangible elements behind these technologies, which drain limited resources and rely on exploitation and investor funding to remain free for the general public, until they are eventually used to turn a profit.
